I need to tell you about something that’s been keeping me up at night lately, and honestly, it should concern you too. There’s a new breed of scam making the rounds, and it’s so convincing that even tech-savvy people are falling for it. We’re talking about AI voice cloning scams, where criminals can fake your grandchild’s voice so perfectly that you’d swear on your life it was them calling you in a panic, begging for money.

Let me paint you a picture. Your phone rings at 11 PM. It’s your grandson. He’s crying, his voice is shaking, and he tells you he’s been in a car accident. He needs £5,000 immediately for bail or medical bills, and please, please don’t tell Mum and Dad because he doesn’t want to worry them. Your heart is racing. You can hear him. It sounds exactly like him. Every inflection, every little quirk in his voice. So you send the money. Except here’s the kicker: your grandson is fast asleep in his bed three streets over, and you’ve just been scammed.

This isn’t science fiction anymore. This is happening right now, today, to people just like you and me. And I’m going to walk you through everything you need to know about this technology, not to scare you (well, maybe a little), but to arm you with knowledge. Because knowledge, my friend, is the best defence we’ve got.

Why This Technology Matters More Than You Think

Voice cloning technology matters because our voices are supposed to be uniquely ours. They’re like fingerprints, except warmer, more personal. When your daughter rings you, you know it’s her within two words. When your best mate calls, you recognise them instantly. We’ve spent our entire lives trusting our ears, and now that trust is being weaponised against us.

But here’s the thing: the technology itself isn’t evil. Voice cloning has legitimate, brilliant uses. It’s helping people who’ve lost their voice to illness speak again. It’s allowing actors to dub films in multiple languages without spending weeks in a recording studio. It’s helping authors create audiobooks more efficiently. Stephen Hawking used voice synthesis technology for decades, and modern voice cloning is the sophisticated grandchild of that same principle.

The problem isn’t the technology. It’s the people who’ve figured out how to twist something useful into something harmful. It’s like how a hammer can build a house or break a window. Same tool, very different intentions.

What Voice Cloning Is Used For (And What It Isn’t)

Let’s get this straight from the start. Legitimate voice cloning technology serves some genuinely helpful purposes. Film studios use it to fix dialogue without calling actors back for expensive reshoots. Medical researchers use it to help people with ALS or throat cancer communicate in voices that sound like their own. Podcast producers use it to correct mistakes without re-recording entire episodes.

What it’s NOT meant for is pretending to be someone else to extract money, create fake evidence, or manipulate people emotionally. It’s not meant for the grandparent scam, where criminals impersonate family members in distress. But just because something isn’t meant for a purpose doesn’t mean people won’t use it that way. People aren’t meant to drive cars into buildings either, but it happens.

The legitimate uses require consent. If a company wants to clone your voice, you sign paperwork, you record specific phrases, you agree to terms. The scammers? They just need a few seconds of audio from your grandchild’s Instagram story, and they’re off to the races.

The Old Days: Before We Could Fake Voices This Well

Remember those ransom calls in old films where the kidnapper would hold a newspaper over the phone to disguise their voice? Or use one of those voice changer toys that made you sound like a robot? That was the extent of voice manipulation for decades. It was obvious, clunky, and frankly a bit rubbish.

Before voice cloning, scammers relied on what I call the “theatre method.” They’d do their research, learn about your family, and then simply pretend to be your relative. They’d call you up, claim to be your grandson, and hope you wouldn’t notice that the voice wasn’t quite right. They’d cough a lot, claim they had a cold, or say the phone line was terrible. They relied on your emotional state, your worry, and your desire to help to override your suspicion.

These scams worked surprisingly often because they exploited something fundamental about human nature: we want to believe our loved ones when they’re in trouble. We want to help. And in a moment of panic, we don’t always think clearly. But at least back then, if you were calm enough to really listen, you could tell something was off. The voice didn’t match. The speech patterns were wrong.

Those days are gone.

The Evolution: From Robot Voices to Perfect Copies

Let me walk you through how we got here, and I promise to keep it simple.

The Early Days: Text-to-Speech (1960s-2000s)

The first computer-generated voices sounded like, well, computers. Remember those old GPS systems that pronounced everything wrong? “Turn left at War-sester Street” when it meant Worcester? That was early text-to-speech technology. It could read words, but it had no soul, no natural rhythm. You’d never mistake it for a real person.

The Middle Years: Better Synthesis (2000s-2016)

Technology improved. Companies like Nuance (widely reported to have supplied speech technology for the early Siri) created systems that sounded more human. They recorded real voice actors saying thousands of phrases, then chopped up those recordings and stitched them together based on what needed to be said. It was like creating a sentence from magazine cutouts, except with sound.

This was better, more natural, but still obviously synthetic. You could hear the joins, the slightly robotic quality. It was fine for GPS directions or automated phone systems, but you’d never mistake it for your actual grandchild.
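
If you’re curious what that cut-and-stitch approach looks like in practice, here’s a toy sketch in Python. It is only an illustration: the snippet names and numbers are made up, and real systems stitched actual audio recordings, not short lists of numbers. What it does show is why you could "hear the joins": every sentence is built from separate pieces with a seam between each one.

```python
# Toy illustration only: real systems stitch audio waveforms, not lists of numbers.
# Each "recording" here is a short list of made-up sample values.
RECORDED_UNITS = {
    "turn": [0.1, 0.3, 0.2],
    "left": [0.5, 0.4],
    "right": [0.6, 0.7],
    "ahead": [0.2, 0.1, 0.0],
}


def stitch(sentence, units=RECORDED_UNITS):
    """Concatenate the pre-recorded snippet for each word in the sentence."""
    samples = []
    joins = []  # positions where one snippet ends and the next begins
    for word in sentence.lower().split():
        if word not in units:
            raise KeyError(f"no recording available for {word!r}")
        if samples:
            joins.append(len(samples))
        samples.extend(units[word])
    return samples, joins


samples, joins = stitch("Turn left")
print(samples)  # [0.1, 0.3, 0.2, 0.5, 0.4]
print(joins)    # [3]
```

Notice that the sketch simply fails on any word it has no recording for. The old systems had the same limit, which is why they needed thousands of recorded phrases to be useful.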

The Revolution: Deep Learning (2016-2020)

Then everything changed. Researchers began applying deep neural networks to speech. Without getting too technical, imagine teaching a computer to understand the patterns in how humans speak by showing it thousands of hours of recordings. The computer learns not just what words sound like, but how humans breathe, pause, add emotion, change pitch. It learns the music of human speech.

In 2016, Google’s DeepMind released WaveNet, and suddenly computer voices sounded genuinely human. In 2018, Google demonstrated Duplex, an AI that could ring a restaurant and book a table, and the person on the other end had no idea they were talking to a machine.

This was impressive. This was also terrifying.

The Current Era: Clone Anyone (2020-Present)

Now we’re in the wild west. Some modern AI voice cloning systems are reported to need as little as three seconds of someone’s voice to create a convincing clone. Three seconds. That’s shorter than most Instagram stories. That’s one sentence from a TikTok video. That’s a voicemail greeting.

Companies like ElevenLabs, Descript, and others offer this technology commercially. The legitimate services try to build in safeguards, requiring consent and verification. But the cat’s out of the bag. The underlying research is published. People with coding skills can build their own systems. And criminals? They absolutely have.

The improvement over earlier versions is almost too good. These systems capture everything: the accent, the speech impediment, the way someone’s voice goes up at the end of questions, the little verbal tics we all have. It’s not just mimicry. It’s replication.

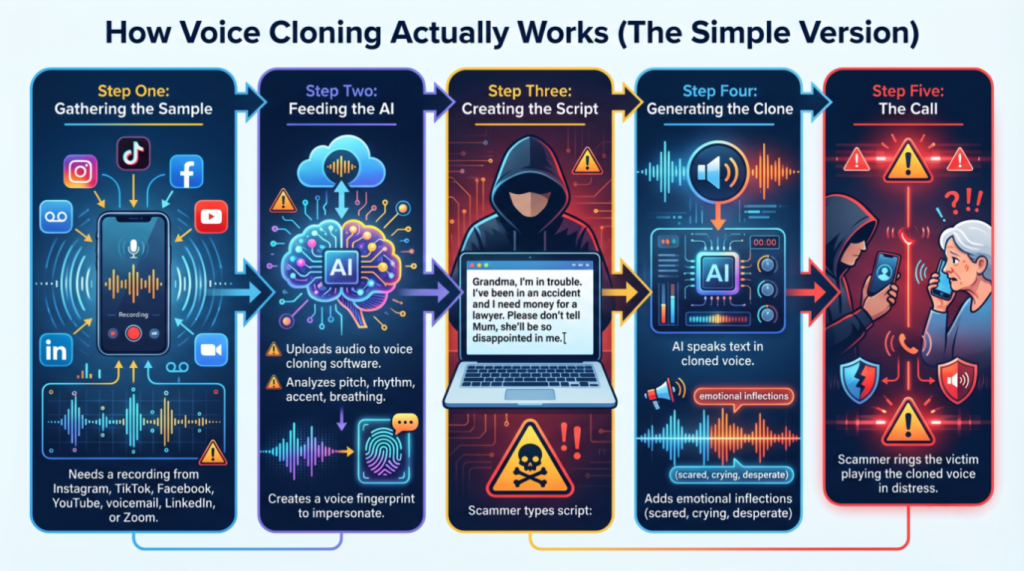

How Voice Cloning Actually Works (The Simple Version)

Right, let me break this down without making your eyes glaze over.

Step One: Gathering the Sample

First, the scammer needs a recording of your grandchild’s voice. This is easier than you’d think. If your grandchild has posted videos on Instagram, TikTok, Facebook, or YouTube, that’s all they need. Even a voicemail greeting works. They might scrape LinkedIn videos, university presentations, or even recorded Zoom calls if they can access them.

Step Two: Feeding the AI

They upload this audio to voice cloning software. The AI analyses everything about how your grandchild speaks. It’s like the software is learning to play your grandchild’s voice like an instrument. It maps out the pitch range, the rhythm, the accent, the breathing patterns. It creates what’s essentially a voice fingerprint, except instead of identifying someone, it’s learning to impersonate them.

Step Three: Creating the Script

The scammer types out what they want to say. “Grandma, I’m in trouble. I’ve been in an accident and I need money for a lawyer. Please don’t tell Mum, she’ll be so disappointed in me.”

Step Four: Generating the Clone

The AI takes that text and speaks it in your grandchild’s voice. Modern systems can even add emotional inflections. They can make the voice sound scared, tearful, desperate. Some systems let you adjust the emotion in real time, like turning a dial.

Step Five: The Call

They ring you. Sometimes they use the cloned voice directly through a computer. Sometimes they record it first and play it back. Either way, you hear what sounds exactly like your grandchild in distress.

It’s that simple. And that’s what makes it so dangerous.

What the Future Holds (And Why I’m Nervous)

I wish I could tell you this technology is going to get harder to access or less convincing. I can’t. The truth is, it’s going to get easier and better.

We’re heading toward a world where someone could clone your voice in real-time during a phone call. You’d speak in English, and the person on the other end would hear you speaking fluent Mandarin in your own voice. That’s the positive use case. The negative? Someone could intercept your call and change what you’re saying entirely, in your voice.

Video is next. We already have deepfakes, videos where people’s faces are convincingly swapped or manipulated. Combine that with voice cloning, and you could have a video call with someone who looks and sounds exactly like your grandchild but isn’t.

But here’s a glimmer of hope: we’re also developing better detection methods. Researchers are working on AI systems that can spot cloned voices by detecting tiny artifacts that human ears can’t catch. Some companies are exploring “voice watermarking,” where legitimate recordings have invisible markers proving they’re authentic.

The future will likely include stronger verification systems. A secret family code word that you can ask for during suspicious calls is something you can set up today. Further out, researchers are exploring biometric voice authentication designed to be much harder to clone, because it measures physical characteristics of your vocal cords, not just how you sound.

But we’re not there yet. Right now, in 2026, the scammers have the upper hand.

Security, Vulnerabilities, and Protecting Yourself

This is the section you need to pay attention to. I’m going to give you practical ways to protect yourself from AI voice cloning scams and the grandparent scam in particular.

Limit Your Digital Voice Footprint

I know, I know. You want to share videos of your holiday or your thoughts on the local council meeting. But every video you post publicly is a potential voice sample. Consider making your social media accounts private. Be selective about what videos you share publicly. If you don’t need a video to be public, don’t make it public.

The same goes for your grandchildren. If they’re posting videos constantly, they’re creating a voice library for potential scammers. Have a conversation with them about privacy settings.

Establish a Family Code Word

This is old-school spy craft, but it works. Agree on a code word or phrase with your family members that only you know. If someone calls claiming to be your grandson and asking for money, ask for the code word. A scammer using a cloned voice won’t know it.

Make it something memorable but not guessable. Not “password” or your dog’s name. Something like “purple elephant” or “Tuesday’s child.” Change it every few months.
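
If you’ve got a grandchild who’s handy with computers, here’s a small Python sketch they could use to pick a code phrase at random, so nobody is tempted to choose something guessable. Treat it as an illustration under a few assumptions: the word list is made up for this example and far too short for real use (a proper list would have hundreds of words), and the function name is my own invention. It uses Python’s standard `secrets` module, which is designed for choices that need to be unpredictable.

```python
import secrets

# Hypothetical word list for illustration; a real one should be much longer.
WORDS = [
    "purple", "elephant", "teapot", "lantern",
    "marmalade", "harbour", "thimble", "walrus",
]


def make_code_phrase(words=WORDS, count=2):
    """Pick `count` different words at random and join them into a phrase."""
    if count > len(words):
        raise ValueError("not enough words to choose from")
    pool = list(words)  # copy, so the original list is left untouched
    chosen = []
    for _ in range(count):
        word = secrets.choice(pool)
        pool.remove(word)  # avoid picking the same word twice
        chosen.append(word)
    return " ".join(chosen)


print(make_code_phrase())  # a different two-word phrase each time
```

Agree the phrase in person or on a call you started yourself, not by text or social media, and don’t write it anywhere a stranger could find it.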

Trust Your Instincts (But Verify)

If something feels off, it probably is. Even the best voice clones sometimes have tells: slightly odd phrasing, unusual vocabulary, or requests that don’t quite fit the person’s character.

But here’s the crucial bit: even if the call sounds completely legitimate, verify before you act. If your grandchild calls asking for money, tell them you’ll call them right back. Then hang up and call their number directly. Don’t use redial. Actually dial their number yourself. If they answer and know nothing about the call, you’ve just dodged a scam.

Never Send Money Immediately

Scammers rely on urgency. They’ll tell you it’s an emergency, that you need to act now, that you can’t tell anyone else. That’s the red flag right there. Legitimate emergencies still allow for a five-minute verification call.

No matter how desperate the voice sounds, tell them you need to verify with another family member first. A real grandchild in trouble will understand. A scammer will get aggressive or hang up.

Be Wary of Payment Methods

Scammers love cryptocurrency, wire transfers, and gift cards because they’re hard to reverse and hard to trace. If someone asks you to pay via these methods, alarm bells should be ringing. Even in a genuine emergency, these wouldn’t typically be the payment method.
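
The warning signs from the last few sections can be pulled together into one simple checklist. Here’s a sketch of that checklist in Python, purely as a memory aid. The names (`Call`, `red_flags`) and the exact wording of each flag are my own assumptions for this example, not part of any official tool, and no program can replace hanging up and ringing back yourself.

```python
from dataclasses import dataclass

# Payment methods the article singles out as favourites of scammers.
IRREVERSIBLE_METHODS = {"cryptocurrency", "wire transfer", "gift cards"}


@dataclass
class Call:
    """A few facts about a phone call, as you would note them down afterwards."""
    asks_for_money: bool
    demands_immediate_action: bool
    asks_for_secrecy: bool
    payment_method: str = ""
    verified_by_callback: bool = False


def red_flags(call):
    """Return a list of the warning signs present in this call."""
    flags = []
    if call.asks_for_money and not call.verified_by_callback:
        flags.append("money requested before you rang back on a known number")
    if call.demands_immediate_action:
        flags.append("pressure to act immediately")
    if call.asks_for_secrecy:
        flags.append("told not to tell anyone")
    if call.payment_method.lower() in IRREVERSIBLE_METHODS:
        flags.append("hard-to-reverse payment method")
    return flags


# The late-night call from the start of this article, paid by gift cards.
late_night_call = Call(
    asks_for_money=True,
    demands_immediate_action=True,
    asks_for_secrecy=True,
    payment_method="gift cards",
)
for flag in red_flags(late_night_call):
    print("Red flag:", flag)
```

Even one flag is reason enough to pause. The late-night call in the example raises all four.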

Report Suspicious Calls

If you receive what you believe is a voice cloning scam, report it. In the UK, contact Action Fraud at 0300 123 2040 or report online at actionfraud.police.uk. In the US, report to the Federal Trade Commission at ReportFraud.ftc.gov. These reports help authorities track patterns and potentially catch the criminals.

Keep Your Personal Information Private

Scammers often combine voice cloning with social engineering. They research you online to make their story more convincing. They’ll mention real family members’ names, real places you’ve been, real events you’ve attended. Lock down your personal information. Don’t overshare on social media about your family structure, your routines, or your whereabouts.

Why You Should Take This Seriously (Without Panicking)

Look, I don’t want you to become paranoid and stop answering your phone. That’s not the point. The point is awareness. Understanding that this technology exists and that criminals are actively using it changes how you approach unexpected calls requesting money.

The pattern is sobering. Older people are disproportionately targeted by these scams, not because they’re less intelligent, but because they’re more trusting, more willing to help family, and often less familiar with what technology can do now.

You grew up in a world where seeing was believing and hearing was trusting. That world has changed. We now live in a reality where audio and video can be fabricated convincingly. It’s not your fault that technology has evolved this way, but it is your responsibility to adapt to it.

Think of it like learning about a new type of crime. When card skimmers first appeared at ATMs, people learned to check for them before inserting their cards. When phishing emails started, people learned to verify sender addresses. This is the same thing. It’s a new threat, and we’re learning new defences.

Wrapping This Up (And What You Need to Remember)

I’ve thrown a lot at you, so let me distil it down to what really matters.

AI voice cloning is real, it’s sophisticated, and criminals are using it right now to scam people by impersonating family members. The technology can replicate someone’s voice from just a few seconds of audio found on social media. These AI voice cloning scams are particularly effective as grandparent scams because they exploit our natural instinct to help family members in distress.

The technology itself isn’t evil. It has legitimate uses in medicine, entertainment, and accessibility. But like any powerful tool, it can be misused.

You can protect yourself. Establish code words with family. Verify before sending money. Trust your instincts. Limit public voice recordings. Never let urgency override verification. If someone calls claiming to be a family member in trouble, hang up and call them back directly on their known number.

The future will bring both better cloning technology and better detection methods. We’re in an arms race between scammers and security, and it’s going to be a long battle.

But here’s what I want you to take away more than anything: you’re not helpless. Knowledge is power, and now you know. You know what’s possible. You know what to watch for. You know how to verify.

Share this information with your friends. Talk to your family about establishing verification methods. Don’t feel embarrassed if you’ve fallen for a scam before. These are sophisticated operations designed by people who study human psychology for a living.

The world has changed, but we can change with it. We can be smart, cautious, and prepared without being fearful or isolated. We can use technology’s benefits while guarding against its dangers.

And the next time you get an unexpected call from a “grandchild” in trouble, you’ll know exactly what to do: hang up, call them back yourself, and verify before you act.

Stay safe out there. And for heaven’s sake, tell your grandchildren to make their Instagram accounts private.

Walter
