Why This Matters More Than You Think

When I first heard people banging on about AI content editing, I thought it was just another tech fad, like those QR codes everyone insisted we’d use for everything back in 2011. Remember those? Yeah, me too. But here’s the thing: this AI-generated content business isn’t going anywhere. In fact, it’s becoming as fundamental to modern work as learning to use email was in the 1990s.

The collaboration between humans and AI isn’t about robots taking over your job. It’s about something far more practical and, dare I say, liberating. Think of it like this: remember when calculators became commonplace? Teachers panicked, parents worried, everyone said kids would forget how to do maths. But what actually happened? We stopped wasting time on tedious arithmetic and started solving more interesting problems. That’s exactly what’s happening with AI and content creation.

The truth is, AI-generated content is flooding the internet right now, whether we like it or not. And much of it is, frankly, rubbish. It reads like it was written by someone who learned English from instruction manuals and has never had an actual conversation. That’s where you come in. Learning to validate, edit, and enhance what AI produces isn’t just a nice skill to have anymore. It’s rapidly becoming essential, like knowing how to spot a dodgy email or understanding that the nice prince from Nigeria probably doesn’t need your help moving his fortune.

What It’s Actually Used For (And What It’s Not)

Content optimization through AI collaboration is brilliant for drafting initial versions of reports, generating product descriptions, creating email templates, summarizing long documents, brainstorming ideas, and producing first drafts of almost anything written. I use it myself when I need to bash out a quick outline or when I’m staring at a blank page at 11 PM wondering why I ever became a writer.

But here’s what it’s not for, and this is crucial. It’s not for replacing human judgment, creating content about sensitive topics without oversight, making important decisions without human review, or producing final-version content without editing. It’s definitely not for anything requiring genuine empathy, nuanced understanding of complex human situations, or original creative vision.

Think of AI as an incredibly keen intern who’s read everything on the internet but has never actually lived a day of real life. They can fetch information brilliantly, draft things quickly, and work all hours. But would you let them write your company’s response to a crisis? Would you trust them to craft your wedding speech? Of course not. They lack something essential, that human touch that comes from actually being human.

What We Had Before All This

Cast your mind back to the before-times. I’m talking about the early 2000s, even the 2010s. Creating content was entirely manual. If you needed a report, you wrote every single word yourself. If you wanted to check your grammar, you either had a colleague review it or you relied on basic spell-checkers that were about as sophisticated as a brick.

We had Microsoft Word’s grammar checker, which was notorious for being confidently wrong. We had Clippy, that insufferable paperclip who thought he was helping. We had style guides in actual physical books that we’d have to look things up in. Research meant hours in libraries or trawling through Google results one by one.

For businesses, content creation was expensive and slow. You needed writers, editors, proofreaders, all working in sequence. A simple brochure could take weeks. A website’s worth of content? Months. And if you needed something translated or adapted for different audiences? Well, I hope you had a decent budget.

The quality control process was entirely human too. Someone had to read every word, check every fact, ensure every comma was in the right place. It was thorough, sure, but it was also time-consuming and, let’s be honest, mind-numbingly tedious at times.

The Evolution: From Clunky to Clever

The Early Days: Autocomplete on Steroids (2010-2019)

The first attempts at AI writing assistance were, to put it kindly, enthusiastic but dim. We had tools like early versions of grammar checkers that could spot basic errors. They were like having a student teacher mark your work, someone who knew the rules but not when to break them.

Google’s search autocomplete was probably most people’s first taste of predictive text AI. It would guess what you were searching for, sometimes hilariously wrong, sometimes eerily accurate. Email platforms started suggesting quick replies like “Sounds good!” or “Thanks!” These were baby steps, but they showed us where things were heading.

The benefit over pure manual work? Speed, mostly. These tools could flag obvious errors faster than any human could read. But they were rigid, rule-based systems. They couldn’t understand context or nuance. They were following instructions, not thinking.

The Breakthrough: GPT-2 and the Dawn of Understanding (2019)

Then in 2019, OpenAI released GPT-2, and things got interesting. For many people, this was the first AI whose text actually sounded like a human wrote it, at least in short bursts. It could keep track of context, maintain a thread of thought, and produce coherent paragraphs.

I remember the first time I saw it in action. Someone asked it to write a story about a detective investigating a missing sandwich, and it produced something genuinely entertaining. It wasn’t Shakespeare, but it wasn’t nonsense either. The technology could now grasp the relationship between ideas, not just match patterns.

The benefit over previous versions was profound. For the first time, we had AI that could actually assist with creative tasks, not just mechanical ones. It was like upgrading from a calculator to a computer.

The Revolution: ChatGPT and the Mainstream Moment (2022-2023)

When ChatGPT launched in November 2022, everything changed. Suddenly, everyone had access to AI that could write essays, debug code, explain complex topics, and hold conversations. Within five days, it had a million users. Within two months, an estimated 100 million.

This version could handle much longer contexts, maintain consistency across pages of text, and adapt its style based on instructions. It was like the difference between a basic mobile phone and a smartphone. Same basic function, but the capabilities expanded exponentially.

The real benefit? Accessibility. You didn’t need to be a programmer or work for a tech company. Anyone could type in a question and get a thoughtful, detailed response. That democratization changed the game entirely.

The Refinement: A Crowded, Capable Landscape (2023-2026)

GPT-4, released in March 2023, was a genuine step up. It handled longer conversations, followed nuanced instructions, and could work with images as well as text. Specialist tools flourished alongside it — Jasper for marketing, Copy.ai for ads, Grammarly’s AI layer for editing. The pitch was simple: use a specialist, not a generalist. It mostly held up.

Then the model wars kicked off. By 2024, OpenAI had released GPT-4o (faster, cheaper, multimodal) and followed it with reasoning models that work through a problem step by step before answering rather than just pattern-matching at speed. Google’s Gemini matured from also-ran to genuine contender. Anthropic’s Claude built a reputation for thoughtful, less robotic writing. And DeepSeek arrived from China, rivalling frontier models at a reported fraction of the cost and rattling the industry’s assumption that a handful of American labs had it all sewn up.

By early 2026, frontier models from OpenAI, Anthropic, and Google are extraordinarily capable, and the gap between them and specialist tools has narrowed sharply. Microsoft Copilot, baked into Word, Outlook, and Teams, has quietly become the AI writing assistant for a huge chunk of the working world, whether people chose it or not. Context windows have expanded dramatically, hallucination rates have fallen, and AI assistance is increasingly just there, woven into the tools you’re already using. The human editorial role hasn’t shrunk, though. If anything, as the output gets more convincing, sharp human judgment matters more. A fluent, plausible-sounding wrong answer is far more dangerous than an obviously clunky one.

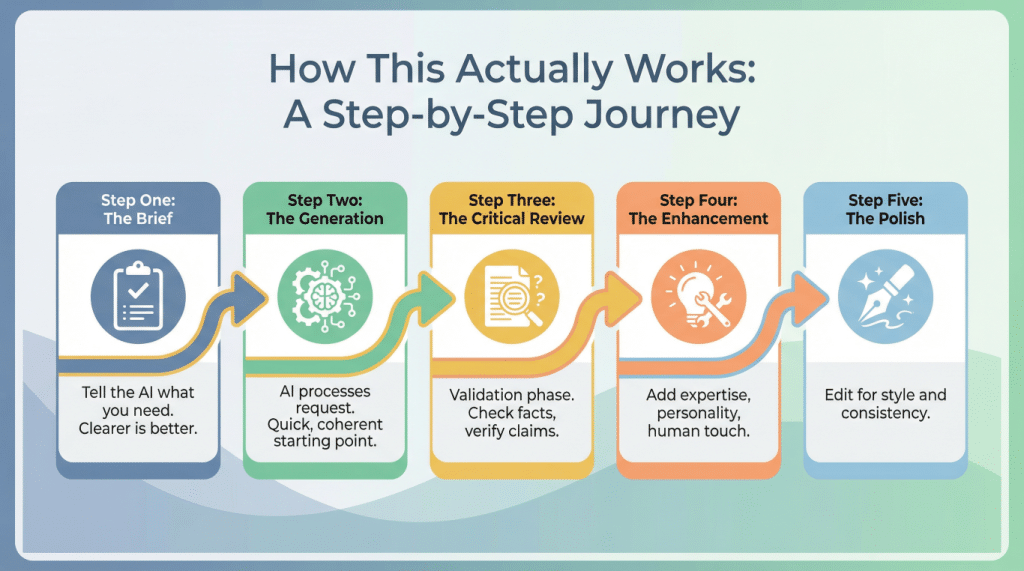

How This Actually Works: A Step-by-Step Journey

Let me walk you through how AI content editing and human collaboration actually works in practice. I’ll use a real example, something I did last week.

Step One: The Brief

You start by telling the AI what you need. This is more important than you might think. It’s like briefing a new employee: the clearer you are, the better the result. I needed a guide about home composting, so I wrote: “Create a 500-word beginner’s guide to home composting, friendly tone, for people with small gardens.”
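If you brief AI tools often, it can help to treat the brief as a little form so you never forget the length, tone, or audience. Here is a minimal sketch of that idea in Python. The `Brief` class and its field names are my own invention for illustration, not part of any AI tool.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """A hypothetical checklist of the details a clear brief should pin down."""
    topic: str
    word_count: int
    tone: str
    audience: str

    def to_prompt(self) -> str:
        # Assemble the fields into one plain-English instruction.
        return (
            f"Create a {self.word_count}-word guide to {self.topic}, "
            f"{self.tone} tone, for {self.audience}."
        )


brief = Brief(
    topic="home composting for beginners",
    word_count=500,
    tone="friendly",
    audience="people with small gardens",
)
print(brief.to_prompt())
```

The point isn’t the code itself. It’s that a brief with a blank field is a brief the AI will fill in with a guess.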

Step Two: The Generation

The AI processes your request using something called a large language model. Now, I’m not going to bore you with the technical details, but essentially, it’s analyzed billions of words from the internet and learned patterns about how language works. It’s like someone who’s read every book in the library and can predict what word should come next based on context.

Within seconds, it produced a draft. Quick, coherent, covering all the basics. Was it perfect? Absolutely not. But it was a solid starting point, which would have taken me an hour to write from scratch.
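If “predict what word should come next” sounds abstract, here is a toy version you can run. It is emphatically not how a real large language model works inside (those use neural networks trained on vastly more text). It’s just a sketch of the underlying idea: count which word tends to follow which, then guess the most likely one. The tiny corpus and the `predict_next` function are made up for illustration.

```python
from collections import Counter, defaultdict
from typing import Optional

# A tiny made-up corpus; a real model learns from billions of words.
corpus = (
    "compost needs air . compost needs water . "
    "compost needs time . worms love compost"
).split()

# Count which word follows each word in the corpus.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1


def predict_next(word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = following.get(word)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


print(predict_next("compost"))  # "needs" follows "compost" three times
print(predict_next("rats"))     # never seen in the corpus, so None
```

Notice that the toy has no idea whether compost actually needs air. It only knows those words turned up together, which is a useful thing to keep in mind for the next step.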

Step Three: The Critical Review

Here’s where you earn your keep. You read through with a critical eye. Does this make sense? Is it accurate? Does it sound human? In my composting guide, the AI had suggested adding “meat and dairy products in moderation.” That’s actually terrible advice that would attract rats and create awful smells. The AI had clearly mixed up different composting methods.

This is the validation phase, and it’s crucial. You’re checking facts, verifying claims, and ensuring nothing misleading or incorrect has crept in. You’re also checking tone, flow, and whether it actually serves the intended purpose.
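You can get a small head start on validation by having a script point out the sentences most likely to need checking: anything with a number in it, anything that leans on a source, anything stated as an absolute. The sketch below is a rough illustration, and the patterns in `CHECK_PATTERNS` are my own guesses at useful warning signs. The sample draft, including its statistic, is invented purely for the demonstration.

```python
import re

# Hypothetical warning signs; tune these to your own subject matter.
CHECK_PATTERNS = {
    "number or statistic": re.compile(r"\d"),
    "cited source": re.compile(r"\b(according to|study|research|survey)\b", re.I),
    "absolute claim": re.compile(r"\b(always|never|guaranteed|proven)\b", re.I),
}


def flag_for_review(draft: str) -> list:
    """Return (sentence, reasons) pairs for sentences matching any pattern."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    flagged = []
    for sentence in sentences:
        reasons = [name for name, pattern in CHECK_PATTERNS.items()
                   if pattern.search(sentence)]
        if reasons:
            flagged.append((sentence, reasons))
    return flagged


# A made-up AI draft; the 40% figure is invented for illustration only.
draft = (
    "Composting is a great way to cut waste. "
    "According to a study, 40% of household rubbish is compostable. "
    "Add meat and dairy products in moderation."
)
flagged = flag_for_review(draft)
for sentence, reasons in flagged:
    print(f"CHECK ({', '.join(reasons)}): {sentence}")
```

Run it and only the statistic gets flagged. The meat and dairy advice sails straight through, because nothing about it looks suspicious to a pattern matcher. That’s exactly why a script like this can only ever be a prompt for your own read-through, never a substitute for it.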

Step Four: The Enhancement

Now you make it better. You add your expertise, your personality, your human touch. I added a personal anecdote about my disastrous first attempt at composting (let’s just say the smell was memorable). I restructured some sections for better flow. I added specific product recommendations based on actual experience.

This is content optimization at its finest. You’re taking the AI’s efficient but generic draft and transforming it into something genuinely valuable. You’re adding the stuff AI can’t: real experience, emotional resonance, practical wisdom.

Step Five: The Polish

Finally, you edit for style and consistency. You ensure it matches your brand voice or personal style. You check that transitions work, that examples are relevant, that the whole thing hangs together as a coherent piece.

The result? Something that took 30 minutes instead of two hours, but still has your expertise and personality stamped all over it. That’s the power of collaboration.

What’s Coming Next

I reckon we’re heading towards something I call “continuous collaboration.” Instead of the current process where you generate, then edit, we’ll work alongside AI in real time. Imagine writing a sentence, and the AI immediately suggests three ways to improve it, each with different strengths. You pick one, adapt it, move on.

We’ll likely see AI that understands your personal style so well it can mimic it convincingly. This sounds scary, but think of it as having a writing assistant who knows exactly how you like things done. Over time, it learns your preferences, your quirks, your pet peeves.

Verification will become more sophisticated too. AI will likely be able to fact-check itself in real time, flagging claims that need human review and providing sources automatically. We might see AI that can say “I’m not certain about this bit, you should double-check it,” rather than confidently spouting nonsense.

The integration will deepen as well. Instead of separate tools, AI assistance will be woven into everything we use. Your email client, your word processor, your content management system, all working together with AI that understands context across all of them.

But here’s what I’m most excited about: I think we’ll see AI that’s genuinely better at collaboration. Current AI is like working with someone who never asks questions, never admits uncertainty, and always sounds confident even when they’re wrong. Future AI might actually say “I don’t understand what you want here, could you clarify?” That would be revolutionary.

The Serious Bit: Security and Why You Should Care

Right, time for the less fun but absolutely essential part. AI-generated content and the tools that create it come with real risks, and ignoring them would be daft.

Your Data Isn’t Always Private

When you put information into an AI tool, you’re often sending it to someone else’s servers. Depending on the service, that data might be used to train future versions of the AI, which means it could theoretically pop up in someone else’s results. I’m not trying to make you paranoid, but you absolutely should not put confidential business information, personal data, or anything sensitive into public AI tools without checking their privacy policy first.

Some services offer business tiers with better privacy protections, but they cost money. It’s worth it if you’re handling anything remotely confidential. Think of it like the difference between shouting your business plans in a crowded pub versus discussing them in a private meeting room.
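One practical habit is to scrub the obvious personal details out of text before you paste it anywhere. Here is a minimal sketch of that, assuming all you want to catch is email addresses and phone-like numbers. The two patterns are rough ones I’ve written for illustration. They will miss things, and they are no substitute for reading the privacy policy.

```python
import re

# Rough, hypothetical patterns; they will miss cases and are not a privacy guarantee.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


note = "Ring Jo on 020 7946 0958 or email jo.bloggs@example.com about the merger."
print(redact(note))
```

The contact details get swapped for placeholders, but the word “merger” goes through untouched. A script can hide an email address. Only you can decide whether the sentence should be leaving the building at all.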

Accuracy Isn’t Guaranteed

AI can and will make things up. It’s called “hallucination” in the industry, which sounds rather poetic for “confidently stating complete bollocks.” The AI doesn’t know it’s wrong; it just generates text that sounds plausible based on patterns it’s learned.

I’ve seen AI cite academic papers that don’t exist, quote statistics it invented, and describe historical events that never happened. It does this with the same confident tone it uses for accurate information. This is why the validation step I mentioned earlier isn’t optional. It’s essential.

Never, ever publish AI-generated content without checking the facts yourself. If it makes a claim, verify it. If it cites a source, look it up. If it sounds too good to be true, it probably is.

Bias Is Baked In

AI learns from the internet, and the internet is full of human biases, prejudices, and outdated attitudes. The AI doesn’t have opinions, but it has absorbed patterns from content that does. This means it can inadvertently reproduce stereotypes, make assumptions based on gender or ethnicity, or favor certain perspectives over others.

You need to watch for this, especially if you’re creating content about people or for diverse audiences. Read with a critical eye. Ask yourself if the content makes assumptions it shouldn’t, if it represents people fairly, if it’s inclusive without being patronizing.

Intellectual Property Gets Murky

The legal situation around AI-generated content is still being sorted out. If AI writes something, who owns it? If it was trained on copyrighted material, is the output derivative? These questions don’t have clear answers yet, and the law is struggling to catch up.

My advice? Always add enough of your own work that the content is clearly yours. Don’t just generate something and publish it unchanged. The editing and enhancement process isn’t just about quality; it’s also about ensuring you have a legitimate claim to the work.

The Dependency Risk

Here’s something people don’t talk about enough. The more we rely on these tools, the more vulnerable we become if they change, disappear, or get expensive. I’m old enough to remember when Google Reader shut down and thousands of people lost their primary way of consuming news. Don’t let AI tools become such a crutch that you can’t work without them.

Maintain your core skills. Keep writing from scratch sometimes. Practice your editing on purely human-written content. Think of AI as a power tool: incredibly useful, but you should still know how to do the job with hand tools if necessary.

Bringing It All Together

So here we are, at the end of our journey through AI-human collaboration. If you take nothing else away from this, remember this: AI is a tool, not a replacement. It’s brilliant at the heavy lifting, the first drafts, the tedious bits. But it needs you for the wisdom, the judgment, the human touch that makes content actually worth reading.

Learning to work with AI-generated content isn’t about becoming a tech expert. It’s about developing a new skill that sits alongside your existing expertise. You bring the knowledge, the experience, the understanding of what actually matters. The AI brings speed, consistency, and the ability to process vast amounts of information quickly.

The collaboration works because each side compensates for the other’s weaknesses. AI can’t tell if something is truly valuable or just sounds good. You can. You can’t process thousands of data points instantly or maintain perfect consistency across hundreds of pages. AI can.

Content optimization through this partnership isn’t just about making things faster, though that’s a lovely bonus. It’s about freeing you from the tedious parts of content creation so you can focus on the bits that actually require human intelligence: the strategy, the creativity, the understanding of what your audience really needs.

The future of work isn’t humans versus AI. It’s humans with AI versus humans without AI. And honestly? I’d rather be in the first group. Not because I’m desperate to embrace every new technology that comes along, I’m really not, but because I’ve seen how much better my work becomes when I use these tools properly.

AI content editing isn’t replacing editors. It’s making good editors more productive and helping people who aren’t trained editors produce better work. It’s democratizing skills that used to require years of training. That’s genuinely exciting, even for a cynical old sod like me.

So give it a try. Start small, maybe use AI to draft an email or summarize a long document. Practice the validation and editing process. Learn what AI is good at and where it falls flat. Develop your eye for spotting AI-generated nonsense and your skill at transforming decent drafts into excellent finished products.

Just remember to keep your critical thinking hat firmly on your head, check everything, add your own expertise, and never publish anything you wouldn’t be happy to put your name to. Do that, and you’ll find this collaboration business isn’t scary at all. It’s actually rather useful.

And isn’t that what good technology should be? Not flashy or intimidating, just genuinely, practically useful. Like a good cup of tea or a comfortable pair of shoes. Essential, reliable, and making your day just that bit better.

Now, if you’ll excuse me, I need to go check if the AI has invented any fake statistics in this article. Because that’s what responsible collaboration looks like.

Walter
